Main topics on the origin of eyes

https://reasonandscience.catsboard.com/t2695-main-topics-on-the-origin-of-eyes

Otangelo Grasso

February 24, 2020, 4:08 AM

The Evolution of the Eye, Demystified

https://evolutionnews.org/2020/02/the-evolution-of-the-eye-demystified/?fbclid=IwAR3fgWoc02Xy0jgggCKa2TLLofsQkuSh3vUWf2xSNk73jU1iMJGzwiTJk2Y

Rhodopsin:

1. Rhodopsin proteins (the main players in vision), the visual cycle, the signal transduction pathway, the eye, the optic nerve, the visual cortex in the brain, and visual data processing are integrated systems. Each is irreducibly complex by itself, and on a higher structural level they are interdependent and work together to create vision.

2. If each of these systems evolved gradually through slight, successive modifications, the intermediate stages would be nonfunctional subsystems. Since natural selection requires a function to select, these subsystems are irreconcilable with the gradualism Darwin envisioned.

3. But let’s suppose that, through some freak evolutionary accident, the individual subsystems were generated. They would still have to be assembled into an integrated functional system of a higher order. That requires meta-information directing the assembly process, that is, genetic information to regulate parts availability, synchronization, and interface compatibility. The individual parts must fit together precisely.

4. All these steps are better explained by a superintelligent and powerful designer than by mindless natural processes, chance, and/or evolution, since we observe that minds are capable of producing cameras, telescopes, and information-processing systems.

https://reasonandscience.catsboard.com/t2638-volvox-eyespots-and-interdependence#5768

Visual data processing

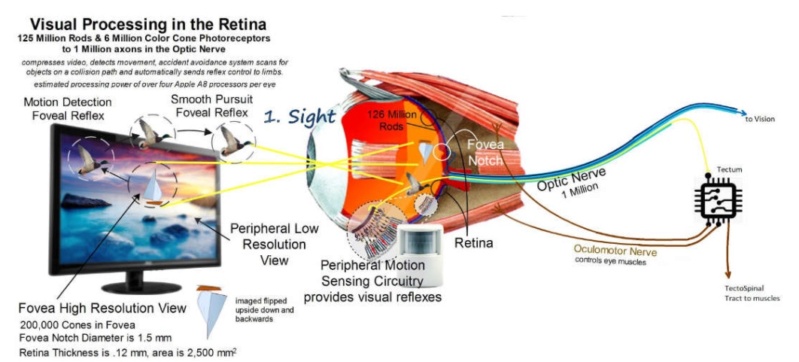

1. Sight is the result of complex data processing. Data input requires that sender and receiver share a common understanding of the data coding and transmission protocols.

2. The rules of any communication system are always defined in advance by a process of deliberate choices. There must be a prearranged agreement between the sender and receiver; otherwise, communication is impossible.

3. Light can be considered an encoded data stream that is decoded and re-encoded by the eye for transmission via the optic nerve, and then decoded by the visual cortex. The sophisticated control systems in the human eye process images and control the eye muscles with speed and precision. The two eyes act as synchronized 576MP cameras. The data is multiplexed, that is, multiple signals are combined into one signal, down to 1MP, to enter the optic nerve, and is then de-multiplexed in the brain. Chromatic aberration is corrected by software. The two pictures are processed together to generate a 3D model. The brain processes that model, extracts data from millions of coordinates, and then sends very precise control data back to the muscles, the iris, the lens, and so on.

4. Only a Master Designer could have conceptualized and implemented these four things: language, the transmitter of language, the multiplexing and de-multiplexing of the message, and the receiver of language. All of them have to be precisely defined in advance before any form of communication is possible at all.
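To make the term "multiplexing" in point 3 concrete, here is a minimal Python sketch of generic round-robin (time-division) multiplexing. It is only an illustration of the concept, not a model of the retina or the optic nerve: the function names `multiplex` and `demultiplex` and the sample values are assumptions made for this example. It shows several signals being combined into one stream, and the receiver needing to know the channel count in advance to separate them again.

```python
from typing import List, Sequence


def multiplex(channels: Sequence[Sequence[int]]) -> List[int]:
    """Interleave equally long channels into one stream, round-robin.

    Hypothetical helper for illustration only.
    """
    if len({len(c) for c in channels}) > 1:
        raise ValueError("all channels must have the same length")
    # zip(*channels) yields one "frame" holding the next sample of every channel
    return [sample for frame in zip(*channels) for sample in frame]


def demultiplex(stream: Sequence[int], n_channels: int) -> List[List[int]]:
    """Split an interleaved stream back into its channels.

    The receiver has to be told n_channels beforehand; it cannot be
    read off the stream itself.
    """
    if n_channels <= 0 or len(stream) % n_channels != 0:
        raise ValueError("stream length must be a multiple of n_channels")
    # every n-th sample, starting at offset i, belongs to channel i
    return [list(stream[i::n_channels]) for i in range(n_channels)]


if __name__ == "__main__":
    signals = [[1, 2, 3], [10, 20, 30]]
    combined = multiplex(signals)
    print(combined)
    print(demultiplex(combined, 2))
```

If the receiver calls `demultiplex` with the wrong channel count, it either fails or returns scrambled channels, which is the "prearranged agreement" idea from point 2 in miniature.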

https://reasonandscience.catsboard.com/t2404-wanna-build-a-cell-a-dvd-player-might-be-easier

Signal transduction pathway

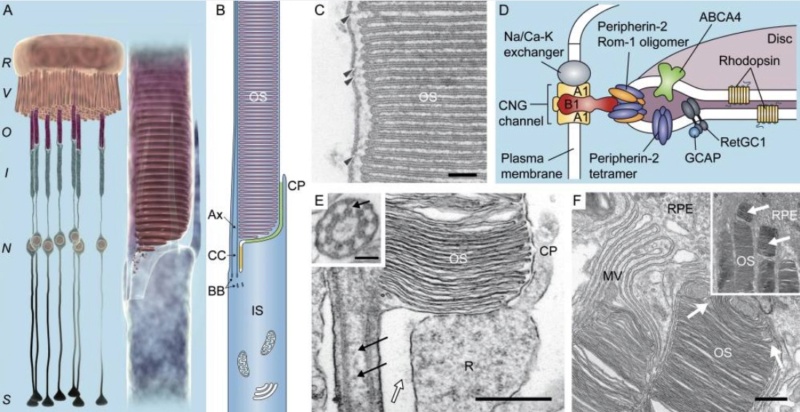

1. The signal transduction pathway is the mechanism by which the energy of a photon triggers a process in the cell that leads to its electrical polarization. This polarization ultimately leads to either the transmission or the inhibition of a neural signal that is fed to the brain via the optic nerve.

2. The pathway must go through nine highly specific steps, none of which has any function unless the whole pathway is completed.

3. Naturalistic mechanisms or undirected causes do not suffice to explain the origin of irreducibly complex systems.

4. Therefore, intelligent design constitutes the best explanation for the origin of the signal transduction pathway.

https://reasonandscience.catsboard.com/t1653-the-irreducible-complex-system-of-the-eye-and-eye-brain-interdependence#2569

The Visual Cycle

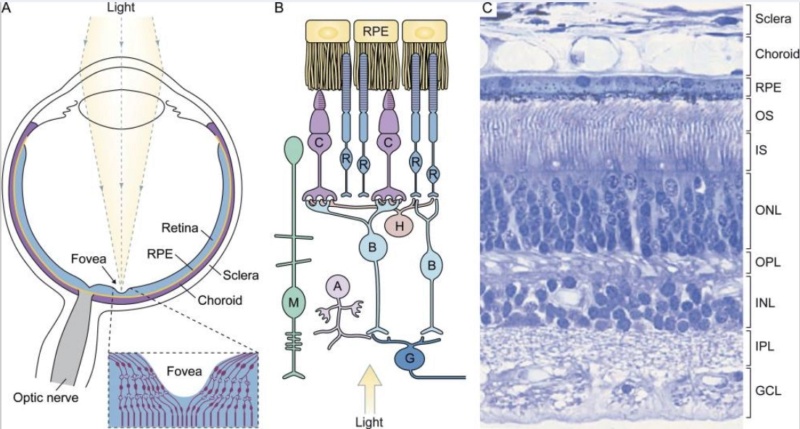

1. Continuous vision depends on the recycling of the photoproduct all-trans-retinal back to visual chromophore 11-cis-retinal. This process is enabled by the visual (retinoid) cycle, a series of biochemical reactions in photoreceptor, adjacent RPE and Müller cells.

2. Eight proteins and parts in the vertebrate visual cycle are essential. If one is missing, the cycle is interrupted, and retinal cannot be converted back to the chromophore 11-cis-retinal.

3. Naturalistic mechanisms or undirected causes do not suffice to explain the origin of irreducibly complex systems.

4. Therefore, intelligent design constitutes the best explanation for the origin of the visual cycle.

https://reasonandscience.catsboard.com/t1638-origin-of-phototransduction-the-visual-cycle-photoreceptors-and-retina#5742

Eye-brain interdependence

1. The vertebrate eye is a complex input device, and requires a reliable communications channel (the optic nerve) to convey the data to the central processing unit (the brain) via the visual cortex.

2. The eye, the optic nerve, and the visual cortex in the brain are three distinct, interdependent parts that together confer vision in vertebrates. None of them confers biological function individually.

3. None of the components of our visual system individually offers any advantage for natural selection.

4. Therefore, intelligent design constitutes the best explanation for the origin of the eye-brain visual system.

https://reasonandscience.catsboard.com/t1653-the-irreducible-complex-system-of-the-eye-and-eye-brain-interdependence

Robert Jastrow:

The eye is a marvelous instrument, resembling a telescope of the highest quality, with a lens, an adjustable focus, a variable diaphragm for controlling the amount of light, and optical corrections for spherical and chromatic aberration. The eye appears to have been designed; no designer of telescopes could have done better. How could this marvelous instrument have evolved by chance, through a succession of random events? (1981, pp. 96-97).

Origin of eyespots - supposedly one of the simplest eyes

https://reasonandscience.catsboard.com/t2638-volvox-eyespots-and-interdependence#5768

How the origin of the human eye is best explained through intelligent design

https://reasonandscience.catsboard.com/t2411-how-the-origin-of-the-human-eye-is-best-explained-through-intelligent-design

The irreducible complex system of the eye, and eye-brain interdependence

https://reasonandscience.catsboard.com/t1653-the-irreducible-complex-system-of-the-eye-and-eye-brain-interdependencece

Volvox, eyespots, and interdependence

https://reasonandscience.catsboard.com/t2638-volvox-eyespots-and-interdependence

Origin of phototransduction, the visual cycle, photoreceptors and retina

https://reasonandscience.catsboard.com/t1638-origin-of-phototransduction-the-visual-cycle-photoreceptors-and-retina

Photoreceptor cells point to intelligent design

https://reasonandscience.catsboard.com/t1696-photoreceptor-cells-point-to-intelligent-design

Is Our ‘Inverted’ Retina Really ‘Bad Design’?

https://reasonandscience.catsboard.com/t1689-is-the-eye-bad-designed

The human eye consists of over two million working parts, making it second only to the brain in complexity. Proponents of evolution believe that the human eye is a product of millions of years of mutations and natural selection. As you read about the amazing complexity of the eye, please ask yourself: could this really be a product of evolution?

Automatic focus

The lens of the eye is suspended in position by hundreds of string-like fibres called zonules. The ciliary muscle changes the shape of the lens. It relaxes to flatten the lens for distance vision; for close work it contracts, rounding out the lens. This happens automatically and instantaneously without you having to think about it.

How could evolution produce a system that even knows when it is in focus, let alone the mechanism to focus?

How would evolution produce a system that can control a muscle that is in the perfect place to change the shape of the lens?

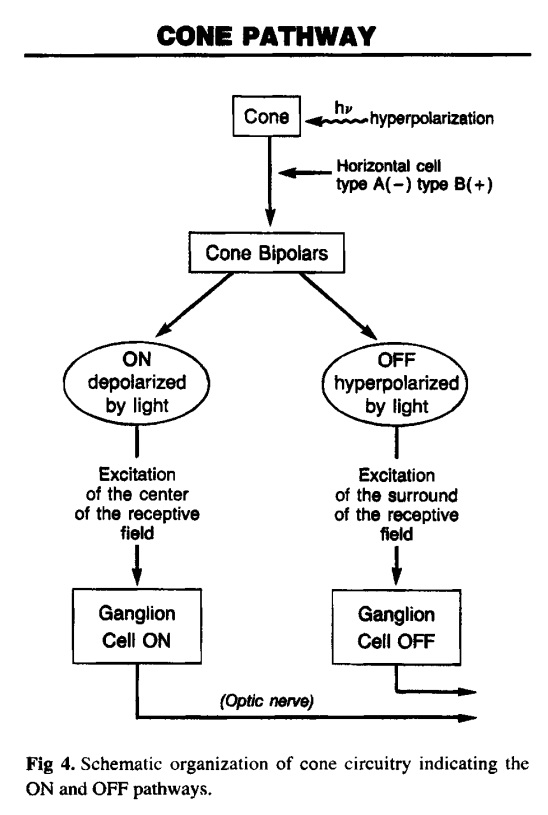

A visual system

The retina is composed of photoreceptor cells. When light falls on one of these cells, it causes a complex chemical reaction that sends an electrical signal through the optic nerve to the brain. It uses a signal transduction pathway consisting of 9 irreducible steps, and the signal must go all the way through. Now, what if this pathway did happen to evolve suddenly, so that such a signal could be sent and go all the way through? So what? How is the receptor cell going to know what to do with this signal? It will have to learn what this signal means. Learning and interpretation are very complicated processes involving a great many other proteins in other unique systems. The cell must then, in one lifetime, evolve the ability to pass on this capacity to interpret vision to its offspring. If it does not, the offspring must learn as well, or vision offers no advantage to them.

All of these wonderful processes need regulation. No function is beneficial unless it can be regulated (turned on and off). If the light-sensitive cells cannot be turned off once they are turned on, vision does not occur. This regulatory ability is also very complicated, involving a great many proteins and other molecules, all of which must be in place initially for vision to be beneficial. How does evolution explain our retinas having the correct cells, which create electrical impulses when light activates them?

Making sense of it all

Each eye takes a slightly different picture of the world. At the optic chiasm each picture is divided in half. The outer left and right halves continue back toward the visual cortex on the same side. The inner left and right halves cross over to the other side of the brain and then continue back toward the visual cortex. Also, the image that is projected onto the retina is upside down, and the brain flips it the right way up during processing. Somehow, the human brain makes sense of the electrical impulses received via the optic nerve, combines the images from both eyes into one image, and does all this in real time.

How could natural selection recognize the problem and evolve the mechanism of the left side of the brain receiving the information from the left side of both eyes and the right side of the brain taking the information from the right side of both eyes? How would evolution produce a system that can interpret electrical impulses and process them into images? Why would evolution produce a system that knows the image on the retina is upside down?
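The routing at the optic chiasm described above can be written down as a small lookup. The following Python sketch is only an illustration of that wiring rule (outer halves stay on their own side, inner halves cross over); the function names `retina_side` and `hemisphere_for` and the string labels are assumptions made for this example.

```python
def retina_side(eye: str, half: str) -> str:
    """Return which side of the retina ('left' or 'right') a half lies on.

    The outer half of an eye's retina lies on the same side as the eye,
    the inner half on the opposite (nose) side. Hypothetical helper.
    """
    if eye not in ("left", "right") or half not in ("outer", "inner"):
        raise ValueError("eye must be left/right, half must be outer/inner")
    if half == "outer":
        return eye
    return "right" if eye == "left" else "left"


def hemisphere_for(eye: str, half: str) -> str:
    """Return the side of the brain that receives a given retinal half.

    Outer halves continue straight back; inner halves cross at the chiasm.
    """
    if eye not in ("left", "right") or half not in ("outer", "inner"):
        raise ValueError("eye must be left/right, half must be outer/inner")
    if half == "outer":
        return eye  # no crossing
    return "right" if eye == "left" else "left"  # crosses over


if __name__ == "__main__":
    for eye in ("left", "right"):
        for half in ("outer", "inner"):
            print(eye, "eye,", half, "half ->",
                  retina_side(eye, half), "retinal side ->",
                  hemisphere_for(eye, half), "side of brain")
```

Running the loop shows that each side of the brain ends up with the same-named side of both retinas, which is the arrangement the questions above refer to.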

Constant level of light

The retina needs a fairly constant level of light intensity to form useful images. The iris muscles control the size of the pupil. The iris contracts and expands, opening and closing the pupil, in response to the brightness of the surrounding light. Just as the aperture in a camera protects the film from overexposure, the iris of the eye helps protect the sensitive retina. How would evolution produce a light sensor? Even if evolution could produce a light sensor, how can a purely naturalistic process like evolution produce a system that knows how to measure light intensity? How would evolution produce a system that would control a muscle which regulates the size of the pupil?

Detailed vision

Cone cells give us our detailed color daytime vision. There are 6 million of them in each human eye. Most of them are located in the central retina. There are three types of cone cells: one sensitive to red light, another to green light, and the third sensitive to blue light.

Isn’t it fortunate that the cone cells are situated in the center of the retina? It would be a bit awkward if your most detailed vision were on the periphery of your eyesight, wouldn’t it?

Night vision

Rod cells give us our dim-light or night vision. They are 500 times more sensitive to light and also more sensitive to motion than cone cells. There are 120 million rod cells in the human eye. Most rod cells are located in our peripheral or side vision. The eye can modify its own light sensitivity. After about 15 seconds in lower light, our bodies increase the level of rhodopsin in our retina. Over the next half hour in low light, our eyes get more and more sensitive. In fact, studies have shown that our eyes are around 600 times more sensitive at night than during the day. Why would the eye have different types of photoreceptor cells, with one specifically to help us see in low light?

Lubrication

The lacrimal gland continually secretes tears which moisten, lubricate, and protect the surface of the eye. Excess tears drain into the lacrimal duct, which empties into the nasal cavity.

If there were no lubrication system, our eyes would dry up and cease to function within a few hours.

If the lubrication wasn’t there, we would all be blind. Did this system not have to be fully set up from the beginning?

We are fortunate to have a lacrimal duct, aren’t we? Otherwise, we would have a steady stream of tears running down our faces!

Protection

Eyelashes protect the eyes from particles that may injure them. They form a screen to keep dust and insects out. Anything touching them triggers the eyelids to blink.

How is it that the eyelids blink when something touches the eyelashes?

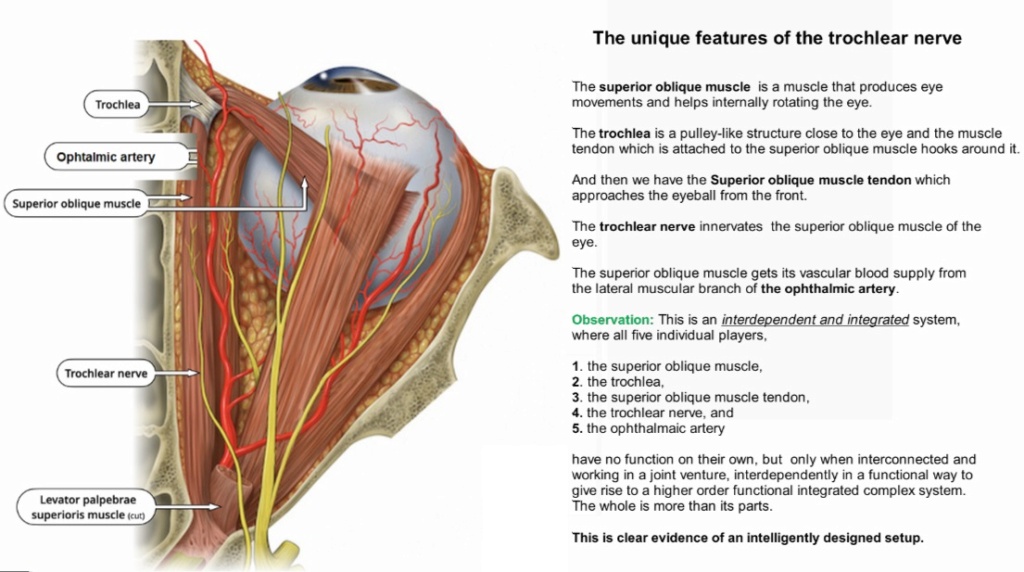

Operational structure

Six muscles are in charge of eye movement. Four of these move the eye up, down, left and right. The other two control the twisting motion of the eye when we tilt our head.

The orbit, or eye socket, is a cone-shaped bony cavity that protects the eye. The socket is padded with fatty tissue that allows the eye to move easily. When you tilt your head to the side, your eye stays level with the horizon. How would evolution produce this? Isn’t it amazing that you can look where you want without having to move your head all the time? If our eye sockets were not padded with fatty tissue, it would be a struggle to move our eyes. Why would evolution produce this?

Poor Design?

Some have claimed that the eye is wired back to front and therefore it must be the product of evolution. They claim that a designer would not design the eye this way. Well, it turns out this argument stems from a lack of knowledge.

The idea that the eye is wired backward comes from a lack of knowledge of eye function and anatomy.

Dr George Marshall

Dr Marshall explains that the nerves could not go behind the eye, because that space is reserved for the choroid, which provides the rich blood supply needed for the very metabolically active retinal pigment epithelium (RPE). This is necessary to regenerate the photoreceptors, and to absorb excess heat. So it is necessary for the nerves to go in front instead.

The more I study the human eye, the harder it is to believe that it evolved. Most people see the miracle of sight. I see a miracle of complexity on viewing things at 100,000 times magnification. It is the perfection of this complexity that causes me to baulk at evolutionary theory.

Dr George Marshall

Evolution of the eye?

Proponents of evolutionary mechanisms have come up with how they think the eye might have gradually evolved over time, but it’s nothing more than speculation.

For instance, observe how Dawkins explains the origin of the eye:

Notice the words ‘suppose’, ‘probably’, ‘suspect’, ‘perhaps’ and ‘imagine’. This is not science but pseudo-scientific speculation and storytelling. Sure, there are a lot of different types of eyes out there, but that doesn’t mean they evolved. Besides, based on the questions above, you can see how much of an oversimplification Dawkins’s presentation is.

Conclusion

The human eye is among the best automatic cameras in existence. Every time we change where we’re looking, our eye (and retina) changes everything else to compensate: focus and light intensity are constantly adjusted to ensure that our eyesight is as good as it can be. Man has made his own cameras, and it took intelligent people to design and build them. The human eye is better than the best human-made camera. How is the emergence of eyes best explained: by evolution or by design?

Last edited by Otangelo on Mon Aug 22, 2022 11:58 am; edited 19 times in total